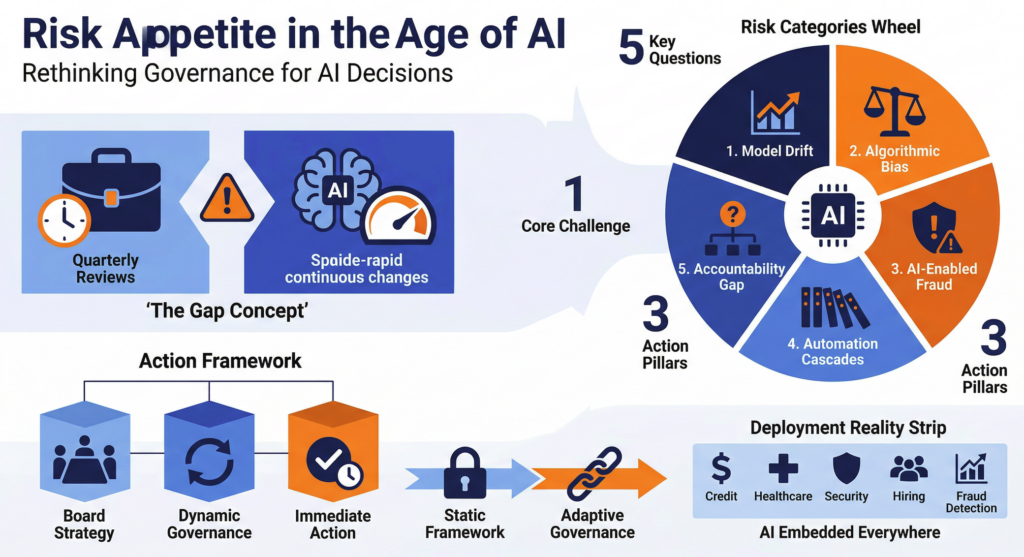

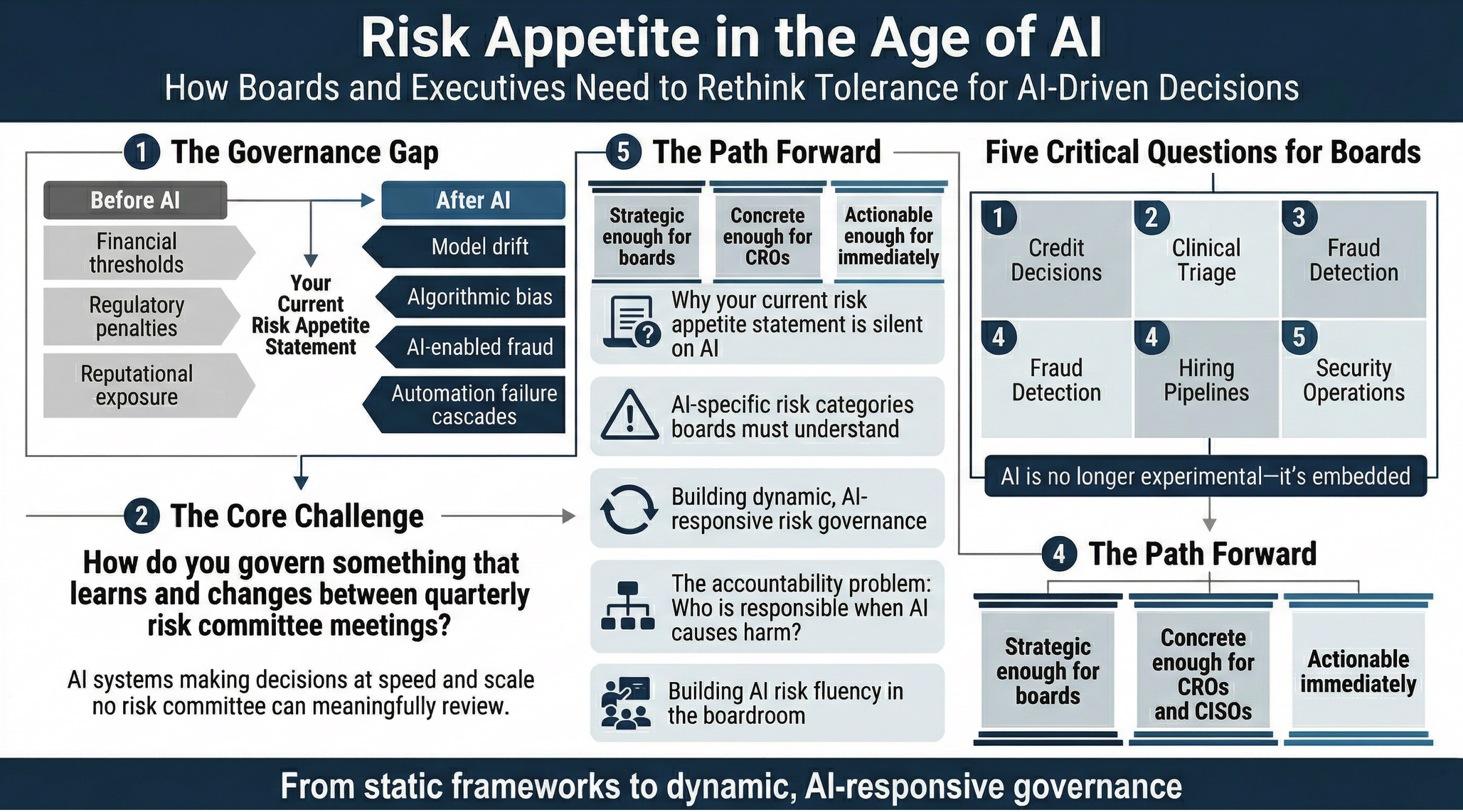

The Governance Gap Nobody Is Naming

There is a quiet crisis unfolding in boardrooms across every sector. Organisations are deploying artificial intelligence at a pace that their governance structures were never designed to match. AI systems are approving loans, triaging security alerts, screening job candidates, generating customer-facing content, and making clinical recommendations. They often do this without meaningful board-level visibility into what those systems are actually doing, how they have changed since they were first approved, or who is accountable when they get something wrong with serious consequences.

The frameworks that boards rely on to govern organisational risk — risk appetite statements, risk registers, audit cycles, compliance programmes — were built for a world where the systems they oversee are relatively stable and human-operated. An annual review was sufficient when the thing being reviewed did not change between reviews. AI changes that assumption fundamentally. A machine learning model can drift from its original behaviour without any deliberate human action. A generative AI system can produce outputs that no one who approved its deployment anticipated. A vendor can retrain a foundation model your organisation depends on and ship the update silently.

Risk appetite in the pre-AI era was a manageable problem. Boards set tolerances, executives enforced them, auditors verified them. The system was slow, but it was coherent. In the AI era, that coherence is breaking down — and most boards have not yet confronted that fact directly.

What Risk Appetite Actually Means — and Why AI Breaks It

Risk appetite is the amount and type of risk an organisation is willing to accept in pursuit of its objectives. In practice, it is expressed through a risk appetite statement — a document that defines boundaries across categories like financial risk, reputational risk, operational risk, regulatory risk, and increasingly, cyber and information security risk.

The problem is structural. Risk appetite frameworks assume that:

Risk is identifiable before it materialises. You can see the categories of risk your organisation faces, assess their likelihood and impact, and set tolerances accordingly. AI introduces risk categories that are genuinely novel — model drift, algorithmic bias, AI-enabled fraud, automation failure cascades — and that most organisations have not yet built into their risk taxonomy.

Risk is stable enough to govern on a quarterly or annual cycle. A risk committee that meets four times a year can meaningfully oversee a risk landscape that does not change dramatically between meetings. AI systems can change continuously — through retraining, vendor updates, new deployment contexts, or simply through encountering data distributions their training never anticipated. By the time a board reviews an AI system, the system may be materially different from what was approved.

Accountability is clear. When a human makes a decision that causes harm, there is an accountability chain. When an AI system makes a decision that causes harm, that chain frequently dissolves. Was it the data scientists who trained the model? The vendor who supplied it? The executive who approved deployment? The board that set the risk appetite? The absence of clear accountability is not just a governance failure — it is a liability exposure.

Controls are sufficient to manage risk. Traditional risk management relies on controls — preventive, detective, corrective — to keep risk within appetite. But AI systems can fail in ways that circumvent conventional controls entirely. A fraud detection model that has silently degraded does not trigger a control failure in any traditional sense. It simply produces increasingly unreliable outputs, and no alarm sounds.

The Five AI Risk Categories Boards Must Understand

To meaningfully update a risk appetite framework for the AI era, boards and executives need a working understanding of the specific risk categories that AI introduces. These are not speculative future risks. They are present, active, and already materialising in organisations that have deployed AI systems without adequate governance.

Model Drift and Silent Degradation

AI models are trained on historical data. The real world changes. When the data an organisation’s AI system encounters in production diverges significantly from the data it was trained on, the model’s performance degrades — often without any visible failure signal. The system continues to operate. It continues to produce outputs. It simply does so with diminishing accuracy, and often no one notices until the damage is measurable.

This is a governance problem because traditional monitoring is designed to detect system failures, not performance degradation in probabilistic systems. A model that has drifted from 95% accuracy to 78% accuracy has not failed in any conventional sense. It has simply become substantially less reliable — and if that model is approving transactions, triaging alerts, or influencing clinical decisions, the consequences of that degradation compound over time.

The risk appetite implication is that organisations need defined performance thresholds for AI systems — and automated mechanisms to detect when those thresholds are breached and escalate to human oversight. This is not a technical nicety. It is a governance requirement.
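The Python sketch below shows one concrete form that "defined performance thresholds" and "automated mechanisms" can take. It uses the Population Stability Index (PSI), a widely used drift measure that compares how production inputs fall across bins with how the training data fell across the same bins. The bin edges, the function names, and the 0.1 and 0.25 cut-offs are assumptions for illustration. The cut-offs are a commonly cited rule of thumb, not a standard, and each organisation would set its own values in its risk appetite framework.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges):
    """Return the share of values in each bin; `edges` are sorted interior cut points."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected, actual, edges, eps=1e-6):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected).

    `eps` floors empty bins so the logarithm is always defined.
    """
    psi = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_status(psi, warn=0.1, breach=0.25):
    """Map a PSI value to an action; the cut-offs are assumed, not prescribed."""
    if psi >= breach:
        return "breach: escalate to human oversight"
    if psi >= warn:
        return "warning: investigate"
    return "stable"


# Hypothetical data: a training-time feature and a shifted production sample.
training = [i / 100 for i in range(100)]
production = [v + 0.5 for v in training]
edges = [0.25, 0.5, 0.75]

psi = population_stability_index(training, production, edges)
print(round(psi, 2), drift_status(psi))
```

PSI checks input drift rather than accuracy directly. Its usefulness here is that it can run continuously, before labelled outcomes exist to confirm that accuracy has fallen.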

Algorithmic Bias and Discriminatory Outcomes

AI systems trained on historical data inherit the patterns, assumptions, and inequities embedded in that data. When historical hiring data reflects decades of systemic bias, a model trained on it will encode and perpetuate that bias — even if no one deliberately programmed discrimination into the system. The same dynamic applies to credit scoring, risk assessment, healthcare triage, and any other domain where AI is being used to make decisions about people.

The governance stakes are significant. Organisations deploying AI systems that discriminate against protected characteristics face legal liability, regulatory enforcement, and severe reputational damage. The EU AI Act explicitly classifies AI systems used in employment, credit, and education as high-risk, with mandatory conformity assessments and documentation requirements.

For boards, the risk appetite question is direct: what is our tolerance for discriminatory outcomes, and how are we verifying that our AI systems are not producing them? If the answer is that this is a matter for the technical team, the governance gap is already material.
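A tolerance for discriminatory outcomes only becomes enforceable once it is expressed as a metric. The Python sketch below computes one common screening measure, the adverse impact ratio, which divides each group's selection rate by the highest group's rate. The 0.8 tolerance mirrors the "four-fifths rule" from US employment selection guidance. Treat it as an illustrative assumption rather than a legal test for any particular jurisdiction. The group labels and outcomes are hypothetical.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs -> selection rate per group."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}


def adverse_impact_ratios(outcomes):
    """Each group's selection rate relative to the most-favoured group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    return {g: (r / best if best > 0 else 1.0) for g, r in rates.items()}


def groups_outside_tolerance(outcomes, tolerance=0.8):
    """Groups whose ratio falls below the (assumed) board-approved tolerance."""
    return sorted(g for g, ratio in adverse_impact_ratios(outcomes).items() if ratio < tolerance)


# Hypothetical screening results: group A 50/100 selected, group B 30/100 selected.
outcomes = [("A", i < 50) for i in range(100)] + [("B", i < 30) for i in range(100)]
print(adverse_impact_ratios(outcomes))
print(groups_outside_tolerance(outcomes))
```

A single ratio does not settle whether a system is fair. It does give a board a number it can set a tolerance against and ask to see reported.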

AI-Enabled Threat Acceleration

The same AI capabilities that organisations are deploying to improve efficiency and decision-making are available to adversaries, and adversaries are using them at scale. Generative AI enables hyper-personalised phishing at industrial volume. Deepfake audio and video enable social engineering attacks that can be very difficult to distinguish from legitimate communication. AI-powered vulnerability scanning enables attackers to identify and exploit security gaps faster than traditional security operations can detect them.

The risk appetite implication is that organisations need to update their threat models to account for AI-accelerated attack vectors. A social engineering control that was adequate when attackers were limited by human bandwidth is inadequate when adversaries can generate thousands of personalised lures per hour. A fraud control calibrated for human fraudsters is inadequate against AI systems that can probe defences continuously and adapt in real time.

This category is particularly acute for boards because the threat surface includes them directly. Deepfake impersonation of executives — board members, CEOs, CFOs — is an active attack vector, not a theoretical one. Boards are targets, not just governors.

Automation Failure Cascades

As AI systems are connected in automated chains — where the output of one system feeds directly into the input of another, and human review is removed or reduced in the interest of efficiency — the potential for failure to propagate and amplify increases dramatically. A single incorrect output from an upstream AI system can cascade through downstream automated processes, causing damage at a speed and scale that human-operated systems could never achieve.

The governance problem is that automation failure cascades can occur between audit cycles, breach risk appetites before any control is triggered, and cause irreversible damage before a human intervenes. The classic response — add more controls — is insufficient when the failure occurs within the automation chain itself, faster than controls can operate.

For boards, this risk category demands a specific governance commitment: human-in-the-loop approval requirements for AI-automated decisions above defined consequence thresholds. The threshold needs to be defined explicitly in the risk appetite framework — not delegated entirely to operational discretion.
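The consequence threshold can be enforced at the point where an automated chain would otherwise act. The Python sketch below shows a hypothetical gate. Any decision whose estimated consequence meets or exceeds the board-set threshold is held for human approval instead of flowing straight downstream. The `Decision` fields, the use of monetary exposure as the consequence measure, and the threshold value are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    system_id: str            # hypothetical identifier from the AI asset register
    action: str
    consequence_value: float  # assumed measure: monetary exposure of the decision


# Assumed to be fixed in the risk appetite framework, not left to operational discretion.
HITL_THRESHOLD = 100_000.0


def gate(decision, threshold=HITL_THRESHOLD):
    """Hold decisions at or above the threshold for a human; let the rest execute."""
    if decision.consequence_value >= threshold:
        return "human_review"
    return "auto_execute"


def run_chain(decisions, threshold=HITL_THRESHOLD):
    """Split a batch so nothing at or above the threshold reaches downstream systems unreviewed."""
    executed, held = [], []
    for d in decisions:
        if gate(d, threshold) == "human_review":
            held.append(d)
        else:
            executed.append(d)
    return executed, held


batch = [
    Decision("credit-model", "approve limit increase", 12_000.0),
    Decision("credit-model", "approve commercial loan", 250_000.0),
]
executed, held = run_chain(batch)
print([d.action for d in held])
```

Placing the gate between systems, rather than only at the end of the chain, is what stops a single bad upstream output from cascading.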

Regulatory Non-Compliance and Legal Liability

The regulatory landscape for AI is moving fast and will not slow down. The EU AI Act entered into force in August 2024 and introduces a risk classification system with staged compliance obligations: prohibited AI practices, high-risk systems requiring conformity assessments, and transparency and documentation obligations for providers of general-purpose AI models. Australia’s voluntary AI Safety Standard articulates ten guardrails, with mandatory requirements actively under development. New Zealand’s AI regulatory conversations are accelerating. Sector-specific AI guidance from financial services, healthcare, and critical infrastructure regulators is proliferating.

The compliance risk for organisations is not a future-state concern. Organisations with EU market exposure, with operations in regulated sectors, or with supply chains that touch AI-enabled vendors are already in scope for compliance obligations that many have not yet assessed.

For boards, the risk appetite question is: have we assessed our regulatory exposure across all applicable AI-related legislation and guidance, and have we assigned named accountability for compliance in each applicable jurisdiction?

Why Traditional Risk Governance Is Not Sufficient

The instinct in many organisations when confronted with a new risk category is to extend existing governance structures — add AI to the risk register, add AI to the audit scope, assign AI oversight to an existing committee. This instinct is understandable and not entirely wrong. But it misses the structural mismatch between traditional governance cadence and AI system behaviour.

The velocity problem. Traditional risk governance operates on quarterly or annual cycles. AI systems can change state continuously. A vendor updates a model. A drift detection threshold is breached. A new use case for an existing model is approved by a product manager without governance review. By the time these changes surface in a risk committee, they may already have produced consequences. Governance that operates on a quarterly cycle cannot effectively oversee systems that change weekly.

The visibility problem. Effective governance requires visibility. Boards can govern what they can see, understand, and interrogate. Many AI systems — particularly those using complex machine learning models — are opaque even to the technical teams that operate them. A board director without a technical background cannot meaningfully challenge a briefing on a transformer model’s attention mechanism. This is not a failure of intelligence. It is a failure of translation — and the responsibility for that translation sits with management, not with the board.

The accountability problem. Traditional risk governance assigns accountability clearly. Someone is responsible for credit risk. Someone is responsible for operational risk. Someone is responsible for cyber risk. The accountability chain for AI risk is frequently unclear, contested, or simply absent. When an AI system produces a harmful outcome, the question of who is accountable — data science, product, legal, the vendor, the executive who approved deployment, the board that approved the risk appetite — is often unanswered in advance. Unanswered accountability is unmanaged risk.

The measurement problem. Risk appetite requires measurable tolerances. You cannot set a tolerance you cannot measure. Many organisations have not yet defined what measurable AI risk looks like — what metric indicates model drift, what threshold constitutes unacceptable bias, what degree of automation constitutes unacceptable removal of human oversight. Without measurable thresholds, risk appetite statements for AI are aspirational documents, not governance instruments.

What Meaningful AI Risk Governance Looks Like

Rebuilding risk appetite frameworks for the AI era does not require discarding existing governance structures. It requires extending and adapting them in four specific ways.

An AI Asset Register. You cannot govern what you cannot see. The foundational requirement for AI risk governance is a complete, current inventory of every AI system operating in or on behalf of the organisation — including AI embedded in third-party products, SaaS applications, and vendor services. The register should capture what decisions each system makes, what data it processes, who is accountable for it, when it was last reviewed, and what its current performance baseline is. Without this, risk appetite for AI is notional.
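The fields the register should capture map naturally onto a simple record type. The Python sketch below shows one hypothetical shape for a register entry. It also includes a check that surfaces two gaps this article keeps returning to: systems with no named accountable individual, and systems whose last review is older than an assumed twelve-month limit. The field names and the review interval are assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AIAsset:
    name: str
    decisions_made: str                 # what the system decides or recommends
    data_processed: List[str]           # categories of data it consumes
    accountable_owner: Optional[str]    # a named individual, not a team or vendor
    last_reviewed: date
    performance_baseline: float         # e.g. accuracy at approval time (assumed metric)
    third_party: bool = False           # embedded in a vendor or SaaS product


def register_gaps(register, today, max_review_age=timedelta(days=365)):
    """Return (asset name, issue) pairs that a board report should surface."""
    gaps = []
    for asset in register:
        if not asset.accountable_owner:
            gaps.append((asset.name, "no named accountable owner"))
        if today - asset.last_reviewed > max_review_age:
            gaps.append((asset.name, "review overdue"))
    return gaps


# Hypothetical entries; names and dates are illustrative only.
register = [
    AIAsset("fraud-scoring", "flags transactions for hold", ["payments"],
            "J. Smith", date(2024, 11, 1), 0.95),
    AIAsset("cv-screening", "shortlists job applicants", ["CVs"],
            None, date(2023, 3, 15), 0.88, third_party=True),
]
print(register_gaps(register, today=date(2025, 6, 30)))
```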

Dynamic Risk Appetite Thresholds. The risk appetite statement needs AI-specific risk categories with defined, measurable tolerance levels — not general statements about responsible AI use, but specific thresholds that trigger review. What percentage of performance deviation from a model’s baseline constitutes a breach of appetite? What is the maximum acceptable impact of an automation failure before human escalation is mandatory? What is the organisation’s tolerance for regulatory AI compliance gaps? These thresholds should be tied to automatic monitoring mechanisms, not annual reviews.
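These questions become governance instruments once each answer is written down as a number that monitoring can check. The Python sketch below encodes a hypothetical AI section of a risk appetite statement as a set of thresholds and evaluates current readings against it. It reuses the earlier example of accuracy falling from 95% to 78%, which is a relative drop of about 18%. Every threshold value and metric name here is an assumption. The design choice worth noting is that a metric with no reading is reported as unmonitored, rather than silently treated as within appetite.

```python
# Hypothetical AI tolerances; the values are illustrative, not recommendations.
AI_RISK_APPETITE = {
    "performance_deviation": 0.05,        # max relative drop from a model's baseline
    "automation_failure_impact": 10_000,  # max loss before human escalation is mandatory
    "open_compliance_gaps": 0,            # no tolerance for unassessed obligations
}


def relative_deviation(baseline, current):
    """Fractional drop in performance relative to the approved baseline."""
    return (baseline - current) / baseline


def evaluate(readings, appetite=AI_RISK_APPETITE):
    """Return (breached, unmonitored) metric names for a board or committee report."""
    breached = sorted(k for k, limit in appetite.items() if k in readings and readings[k] > limit)
    unmonitored = sorted(k for k in appetite if k not in readings)
    return breached, unmonitored


readings = {
    "performance_deviation": relative_deviation(0.95, 0.78),  # about 0.179
    "open_compliance_gaps": 1,
}
print(evaluate(readings))
```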

Named Accountability Chains. For every AI system that makes consequential decisions, there must be a named individual — not a team, not a committee, not a vendor — who is personally accountable for that system’s performance, its compliance with risk appetite, and its escalation when things go wrong. This accountability should be documented, reviewed annually, and updated when systems or roles change.

Continuous Assurance. Point-in-time auditing is structurally insufficient for AI governance. Organisations need continuous monitoring of AI system performance, control effectiveness, and compliance with risk appetite — with automated alerting when thresholds are breached and defined escalation paths when they are. This is the governance model that AI demands, and it is achievable with current technology. The barrier is not technical. It is organisational commitment.
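Continuous assurance adds a time dimension. The question is not only whether a threshold is breached, but how long the breach persists. The Python sketch below scans a stream of monitoring readings. It alerts the named owner on the first breach and escalates to the risk committee if the breach lasts for an assumed number of consecutive checks. The escalation targets, the persistence rule, and the sample readings are all hypothetical.

```python
def escalation_events(readings, limit, persist_checks=3):
    """Return (check index, action) pairs for a chronological series of metric readings.

    A reading above `limit` is a breach. The first breach in a run alerts the named
    owner; a run lasting `persist_checks` consecutive checks escalates further.
    """
    events = []
    run = 0
    for i, value in enumerate(readings):
        if value > limit:
            run += 1
            if run == 1:
                events.append((i, "alert named owner"))
            if run == persist_checks:
                events.append((i, "escalate to risk committee"))
        else:
            run = 0  # back within appetite: reset the breach run
    return events


# Hypothetical weekly performance-deviation readings against a 5% tolerance.
weekly = [0.02, 0.06, 0.07, 0.08, 0.03, 0.09]
print(escalation_events(weekly, limit=0.05))
```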

The Board’s Specific Role

There is a temptation in organisations to treat AI governance as a technical matter — something for the CTO, the CISO, or the data science team to manage, with board oversight limited to high-level briefings. This is a mistake, for reasons that go beyond good governance practice.

Boards have legal duties of care. Directors who approve AI deployment without adequately understanding the risks those systems carry, and without ensuring that governance structures are fit for purpose, may be exposed to personal liability in an increasingly clear regulatory environment. The EU AI Act’s accountability provisions, Australia’s evolving AI safety obligations, and sector-specific regulatory expectations all point in the same direction: AI governance is a board-level responsibility, not a delegation matter.

Boards also have a unique role that no other governance structure can play: they set the tone. When a board demands AI risk literacy — when directors ask hard questions about model performance, about accountability chains, about regulatory exposure — that demand cascades through the organisation. When boards treat AI as a technology footnote, that attitude cascades too.

The practical implication is that boards need AI risk fluency — not technical depth, but sufficient understanding to ask meaningful questions, challenge inadequate answers, and recognise when briefings are substituting reassurance for rigour. That fluency is achievable, and it does not require directors to become data scientists. It requires them to apply to AI the same critical engagement they already apply to financial risk, legal risk, and strategic risk.

Three Things Boards Should Do Before the Next Meeting

The gap between where most boards are and where they need to be on AI governance is real — but it is closeable. Three actions, none of which require significant technical investment, can materially improve an organisation’s AI risk governance posture immediately.

Commission an AI asset audit. Instruct management to produce a complete inventory of AI systems operating in or on behalf of the organisation within sixty days. The output should include what each system does, what decisions it makes, who is accountable, and when it was last reviewed. The existence of this inventory — or the discovery of its absence — is itself a governance signal.

Add AI-specific risk categories to the risk appetite statement. At the next board or risk committee meeting, table a motion to add the five AI risk categories — model drift, algorithmic bias, AI-enabled threats, automation failure, and regulatory non-compliance — to the organisation’s risk appetite framework, with a commitment to define measurable tolerances for each within ninety days. This does not require deep technical expertise. It requires the governance will to name what is currently unnamed.

Assign named accountability. For every AI system that the asset audit identifies as making consequential decisions, require management to nominate a named individual accountable for its governance. That individual should be identifiable by name, contactable, and responsible for reporting to the board or risk committee on a defined schedule. Accountability that lives in a job description but not in a named person is accountability in name only.

Conclusion: The Governance Will Is the Hard Part

The technical infrastructure to govern AI responsibly exists. The frameworks (ISO/IEC 42001, the NIST AI RMF, and the EU AI Act’s compliance architecture) provide workable starting points. The tools for continuous monitoring, automated alerting, and AI performance measurement are available. The barrier to meaningful AI risk governance in most organisations is not technical. It is the organisational will to treat AI governance with the same rigour that organisations apply to financial governance, legal governance, and information security governance.

Risk appetite in the age of AI is not a new compliance obligation to be discharged and forgotten. It is a governance posture to be built, maintained, and continuously updated as AI systems, regulatory requirements, and the threat landscape evolve. Boards and executives who recognise that now — before an AI-related incident makes the recognition unavoidable — will be the ones who can genuinely claim to govern their organisations rather than simply oversee them.

The question is not whether your organisation’s AI systems carry risk. They do. The question is whether your board has decided, explicitly and honestly, how much of that risk it is prepared to carry — and put governance structures in place to ensure it does not carry more than it intended.
