Building Cyber Risk Metrics: From KPIs to KRIs — Including AI Risks

The question nobody asks before they build the metrics

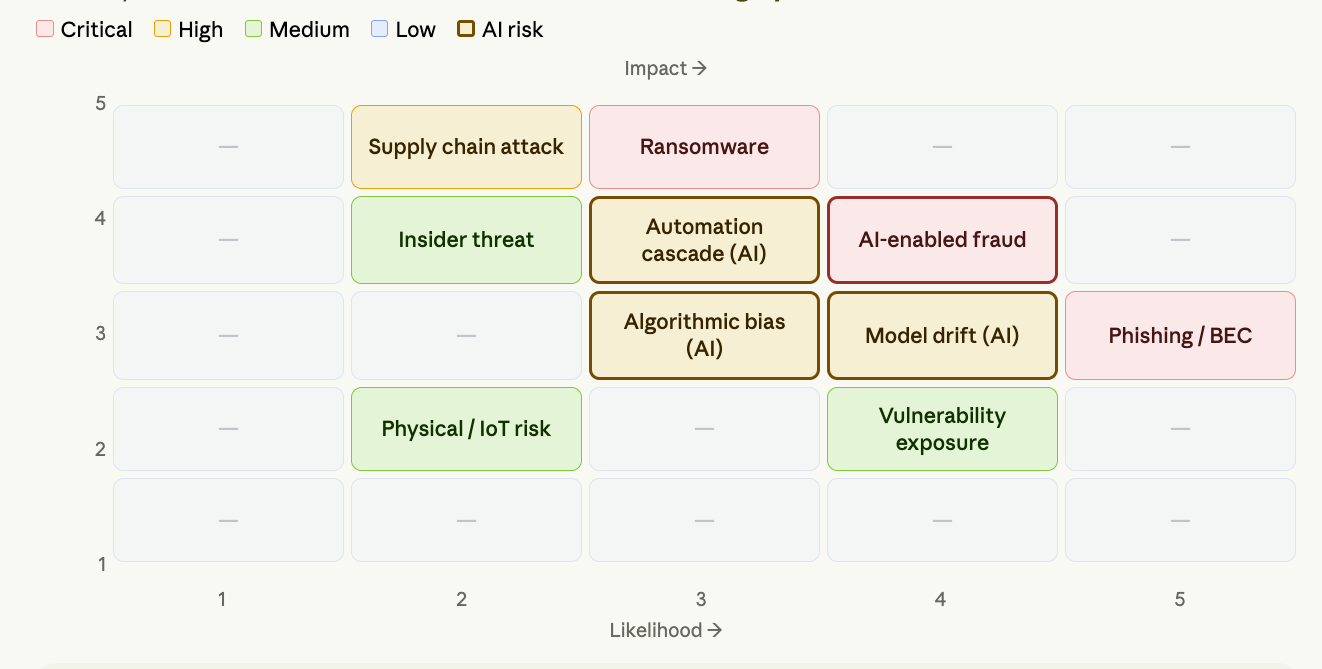

Most organisations jump straight to populating a risk matrix — heat maps, red/amber/green cells, likelihood scores. They treat it as a documentation exercise rather than a governance instrument. The result is a beautifully formatted spreadsheet that nobody consults when a real decision needs to be made. Building a Cyber Risk Matrix that actually works — one that connects Key Performance Indicators (KPIs) to Key Risk Indicators (KRIs), that surfaces AI-specific risks alongside traditional cyber threats, and that gives executives actionable intelligence — requires foundations that most organisations skip. The matrix is the output. The prerequisites are the work.

Security teams measure a great deal. Patch rates, alert volumes, incident counts, training completion percentages, vulnerability scores. Dashboards are full. Reports are long. And yet boards and executives consistently say they do not have the information they need to make risk decisions.

The problem is almost never a shortage of data. It is a shortage of intelligence. Data tells you what happened. Intelligence tells you what it means, whether it is getting better or worse, and what action is required. Most cyber metrics programmes produce data. A cyber risk metrics programme produces intelligence.

The difference is structural. A metrics programme built on the right foundations — starting with risk appetite and working through to named ownership of every indicator — produces intelligence that executives can act on. Built without those foundations, it produces reporting that nobody reads and dashboards that nobody trusts.

This article walks through how to build that programme from the ground up, including where AI risks change the measurement vocabulary and what the prerequisites are before a single metric is defined.

https://legal.thomsonreuters.com/blog/key-risk-indicators-kris-an-overview/

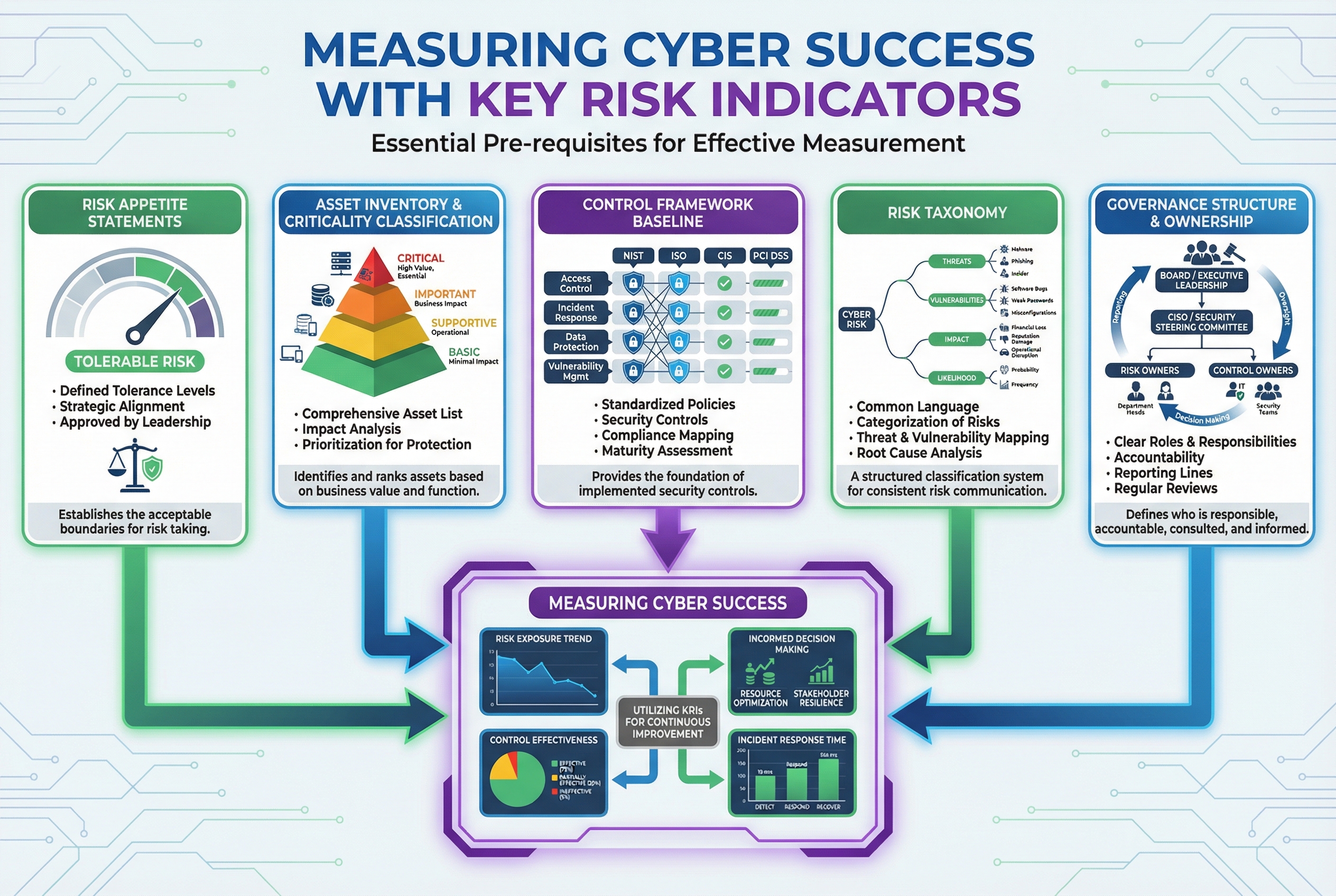

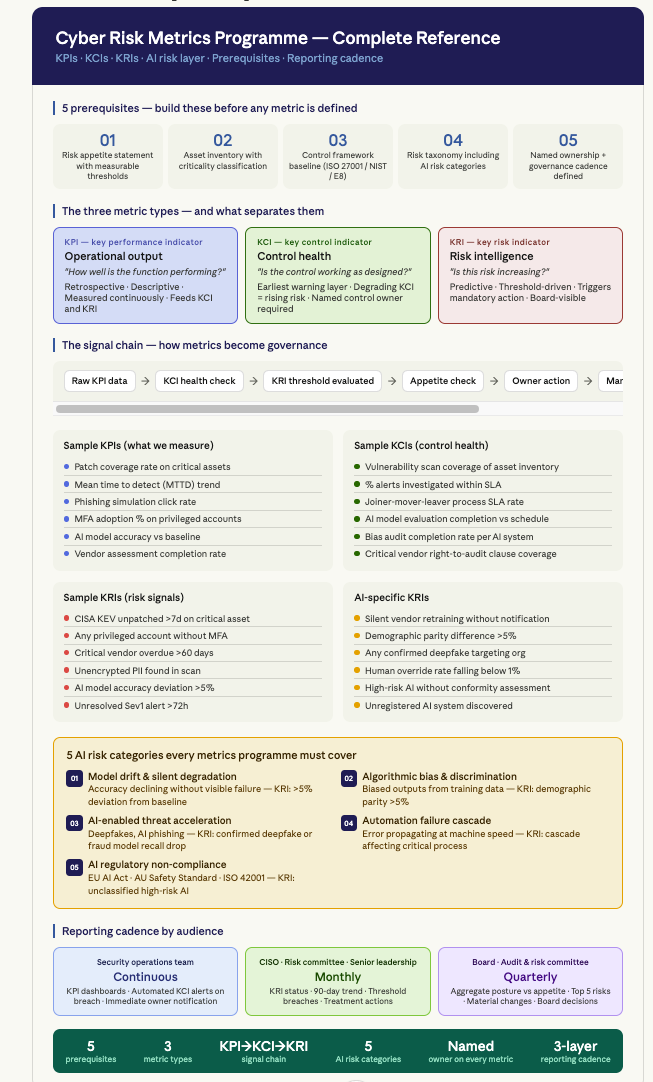

The prerequisites: what must exist before developing the metrics

Part one: the prerequisites

This is the section most practitioners skip. They inherit a set of metrics from a previous team, add new ones when something goes wrong, and never establish the governance foundations that would make the metrics coherent. The result is a metrics programme that measures things because they are measurable, not because they are meaningful.

There are five prerequisites. All five must exist before the metrics programme is designed. If any are absent, build them first — even in rough form — before adding measurement.

Prerequisite 1 — Risk appetite statement

A risk appetite statement defines the amount and type of risk the organisation is willing to accept in pursuit of its objectives. It is the most important input to a metrics programme because it defines the scale. Without it, a KRI (Key Risk Indicator) threshold is arbitrary. You cannot say that a patch lag of 14 days on critical systems is a breach of appetite unless appetite has been defined.

A useful risk appetite statement for cyber and AI risk does four things. It names the specific risk categories the organisation cares about. It defines tolerance thresholds in measurable terms: not “we have low tolerance for data breaches” but “we have zero tolerance for undetected exfiltration of customer PII.” It assigns named accountability, so that one person owns each category. And it defines the threshold at which board-level escalation is triggered.

For AI risks specifically, most organisations discover that their existing risk appetite statements say nothing about model drift, algorithmic bias, AI-enabled fraud, or automation failure cascades. That silence must be addressed before AI risks can be properly measured. A KRI for model accuracy deviation is meaningless without a defined appetite threshold for how much deviation is acceptable.
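One way to make an appetite statement usable by a metrics programme is to hold each line of it as structured data rather than prose. The sketch below is a minimal illustration, assuming one record per risk category with a measurable tolerance, a named owner and a board-escalation level. The category keys, owner placeholders and the 30-day and 10% escalation levels are hypothetical; the 14-day patch lag, zero-tolerance PII exfiltration and 5% model-deviation figures echo examples used in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppetiteEntry:
    """One measurable line of a risk appetite statement (illustrative structure)."""
    category: str            # risk category from the taxonomy
    measure: str             # what is measured, in concrete units
    tolerance: float         # highest value still within appetite
    owner: str               # one named, accountable individual
    board_escalation: float  # value at or above which the board is notified

    def assess(self, value: float) -> str:
        """Classify a measurement against this appetite entry."""
        if value >= self.board_escalation:
            return "board_escalation"
        if value > self.tolerance:
            return "outside_appetite"
        return "within_appetite"


# Hypothetical entries; owners are placeholders, not real roles.
APPETITE = {
    e.category: e
    for e in [
        AppetiteEntry("data_protection", "undetected customer PII exfiltration events",
                      tolerance=0, owner="<named DPO>", board_escalation=1),
        AppetiteEntry("vulnerability_management",
                      "patch lag on internet-facing critical systems (days)",
                      tolerance=14, owner="<named infrastructure lead>", board_escalation=30),
        AppetiteEntry("model_drift",
                      "accuracy deviation from baseline on high-criticality AI (%)",
                      tolerance=5.0, owner="<named AI platform lead>", board_escalation=10.0),
    ]
}
```

A zero-tolerance category is expressed here by setting the tolerance to 0 and the escalation level to 1, so a single event goes straight to the board.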

Prerequisite 2 — Asset inventory with criticality classification

You cannot measure risk against assets you do not know exist. A current, complete asset inventory — covering on-premises infrastructure, cloud environments, endpoints, applications, AI systems, third-party integrations, and shadow IT — is the foundational data layer for the entire metrics programme.

Criticality classification takes this further. A metrics programme that treats a public marketing website and a core payment processing system as equivalent is not measuring risk — it is measuring activity. Criticality classification assigns a business impact weight to each asset class, which then modifies how risks against those assets are scored and how threshold breaches on their associated KRIs are escalated.

For AI systems, the inventory must go further than traditional asset categories. It needs to capture what decisions each AI system makes, at what volume, and what the consequence of a wrong decision is. An AI system that generates internal content drafts and an AI system that approves financial transactions have categorically different criticality profiles, and their KRIs need different thresholds.
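The inventory and criticality ideas above can be sketched as a small data model. This is a hypothetical illustration, not a standard schema: it assumes a 1 to 4 criticality scale used as a multiplicative weight on a raw risk score, and adds the AI-specific fields the text calls for (what the system decides, at what volume, and the consequence of a wrong decision). All asset names and figures are invented.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Criticality(IntEnum):
    """Business-impact weight per asset (illustrative 1-4 scale)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class Asset:
    name: str
    asset_class: str                       # e.g. "web", "payments", "ai_system"
    criticality: Criticality
    # AI-specific fields: left as None for non-AI assets.
    ai_decision: Optional[str] = None      # what the system decides
    decisions_per_day: Optional[int] = None
    wrong_decision_impact: Optional[str] = None


def weighted_score(base_score: float, asset: Asset) -> float:
    """Scale a raw risk score by the asset's criticality weight."""
    return base_score * int(asset.criticality)


inventory = [
    Asset("marketing-site", "web", Criticality.LOW),
    Asset("payments-core", "payments", Criticality.CRITICAL),
    Asset("drafting-assistant", "ai_system", Criticality.LOW,
          ai_decision="drafts internal content", decisions_per_day=400,
          wrong_decision_impact="editor rejects draft"),
    Asset("txn-approver", "ai_system", Criticality.CRITICAL,
          ai_decision="approves financial transactions", decisions_per_day=20_000,
          wrong_decision_impact="fraudulent payment released"),
]
```

The same raw finding scores four times higher on the payment system than on the marketing site, which is the behaviour the text asks criticality classification to produce.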

Prerequisite 3 — Control framework baseline

A Key Risk Indicator is a signal that a control is degrading or that a risk is increasing. To measure that signal meaningfully, you need to know what the baseline state of your controls is. That requires selecting a control framework (ISO 27001, NIST CSF, CIS Controls, Essential Eight, or a sector-specific framework) and conducting a maturity assessment against it.

The baseline serves two purposes. It identifies current control gaps that represent active risk exposures. And it establishes the benchmark against which future KRI measurements can detect regression. A KRI for endpoint protection coverage means nothing without a baseline that defines what 100% coverage looks like for your environment.
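A minimal sketch of how a baseline turns a coverage number into a regression signal follows. It assumes the baseline is simply the set of asset identifiers that should carry the control, and that a regression is a fall of more than a hypothetical 2-point allowance below the baselined coverage; the host names are invented.

```python
def coverage_pct(protected: set, baseline_assets: set) -> float:
    """Share of baselined assets that currently report the control, in percent."""
    if not baseline_assets:
        raise ValueError("empty baseline: define what 100% coverage means first")
    # Hosts reporting the control but absent from the baseline are ignored.
    return 100.0 * len(protected & baseline_assets) / len(baseline_assets)


def has_regressed(current_pct: float, baseline_pct: float, allowance_pts: float = 2.0) -> bool:
    """True when coverage has fallen more than `allowance_pts` below the baseline."""
    return current_pct < baseline_pct - allowance_pts
```

For example, if the baseline holds four endpoints and only three of them report protection, coverage is 75% regardless of how many unknown hosts also report, which is why the baseline depends on the asset inventory.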

Prerequisite 4 — Risk taxonomy

A risk taxonomy is the shared vocabulary of the metrics programme. It defines the risk categories, sub-categories, and event types that the organisation uses consistently across all risk and security reporting. Without it, different teams in the same organisation are measuring different things and calling them by the same names.

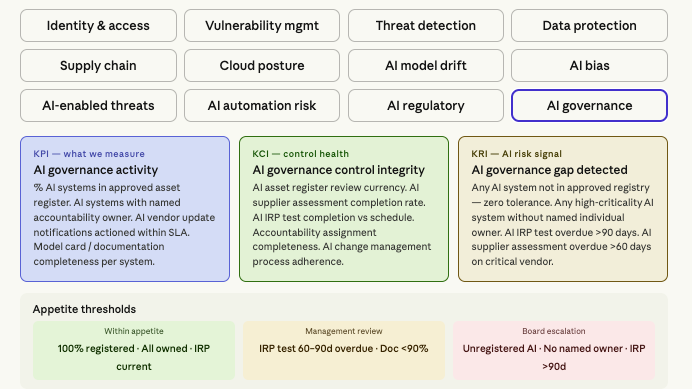

A modern cyber risk taxonomy must include both traditional categories — network security, endpoint security, identity and access, data protection, third-party risk, operational resilience — and AI-specific categories that most traditional taxonomies omit entirely. Model drift, algorithmic bias, AI-enabled threats, automation failure, and AI regulatory compliance all need named places in the taxonomy before they can appear in the metrics programme.
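A taxonomy can be enforced in tooling as a closed set of categories, so that a label with no place in the taxonomy fails loudly instead of being silently renamed. The sketch below is illustrative: the category list comes from the paragraph above, while the string keys and the label-normalisation rule are assumptions.

```python
from enum import Enum


class RiskCategory(Enum):
    # Traditional cyber categories
    NETWORK_SECURITY = "network_security"
    ENDPOINT_SECURITY = "endpoint_security"
    IDENTITY_AND_ACCESS = "identity_and_access"
    DATA_PROTECTION = "data_protection"
    THIRD_PARTY = "third_party_risk"
    OPERATIONAL_RESILIENCE = "operational_resilience"
    # AI-specific categories most traditional taxonomies omit
    MODEL_DRIFT = "model_drift"
    ALGORITHMIC_BIAS = "algorithmic_bias"
    AI_ENABLED_THREATS = "ai_enabled_threats"
    AUTOMATION_FAILURE = "automation_failure"
    AI_REGULATORY_COMPLIANCE = "ai_regulatory_compliance"


def to_category(label: str) -> RiskCategory:
    """Normalise a team's free-text label onto the shared taxonomy.

    Raises ValueError for labels that have no place in the taxonomy, so
    unclassified risks surface instead of being quietly relabelled.
    """
    key = label.strip().lower().replace("-", "_").replace(" ", "_")
    return RiskCategory(key)
```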

Prerequisite 5 — Named ownership and governance cadence

Every metric in the programme needs a named owner — not a team, not a function, a named individual who is accountable for monitoring the metric, maintaining the associated indicator, and escalating when thresholds are breached. Without named ownership, a metrics programme is maintained by nobody and acted on by nobody.

The governance cadence defines how frequently each layer of the metrics programme is reviewed. Operational metrics should be monitored continuously, with automated alerting on threshold breaches. Management-level risk reporting should occur monthly. A board-level metrics summary should be produced quarterly, supplemented by immediate escalation when a critical KRI threshold is breached.
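The ownership and cadence rules above can be checked mechanically against a metric register. This is a hypothetical sketch: it assumes each registered metric records its reporting layer, its owner and whether that owner is an individual rather than a team, and it maps layers to the cadences given in the text.

```python
from dataclasses import dataclass
from typing import List

# Review cadence per reporting layer, as described in the text.
LAYER_CADENCE = {
    "operational": "continuous",   # automated alerting on threshold breaches
    "management": "monthly",
    "board": "quarterly",
}


@dataclass
class MetricRegistration:
    metric: str
    layer: str
    owner: str
    owner_is_individual: bool   # False for teams, functions or shared mailboxes
    critical_kri: bool = False


def governance_gaps(metrics: List[MetricRegistration]) -> List[str]:
    """List metrics that lack a named individual owner or a known review layer."""
    gaps = []
    for m in metrics:
        if not m.owner or not m.owner_is_individual:
            gaps.append(f"{m.metric}: needs a named individual owner")
        if m.layer not in LAYER_CADENCE:
            gaps.append(f"{m.metric}: unknown layer '{m.layer}'")
    return gaps


def review_due(m: MetricRegistration, threshold_breached: bool) -> str:
    """Immediate escalation overrides the normal cadence for critical KRI breaches."""
    if threshold_breached and m.critical_kri:
        return "immediate"
    return LAYER_CADENCE[m.layer]
```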

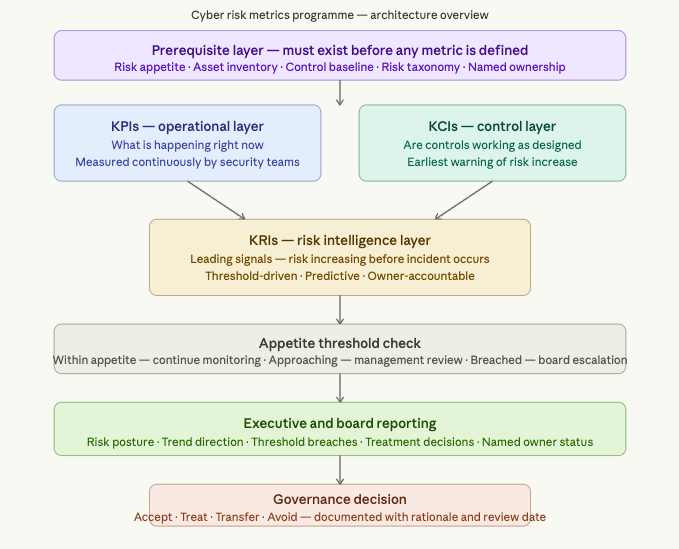

Part two: KPIs, KCIs, and KRIs — understanding the three layers

The three metric types are frequently conflated in practice. Getting the distinction right is not semantic pedantry — it determines whether your metrics programme produces operational reporting or risk intelligence.

A Key Performance Indicator answers the question: how well is the security function performing its activities right now? Patch coverage rate. Mean time to detect. Training completion percentage. These are valuable operational measures, but they are retrospective. They tell you what has happened.

A Key Control Indicator answers the question: is the control that is supposed to manage this risk operating as designed? MFA enforcement rate on privileged accounts. Vulnerability scan coverage as a percentage of known assets. Backup success rate. KCIs are the earliest warning layer in the programme. A KCI degrading is the first signal that a risk the control was managing is becoming more exposed — before the KRI threshold is breached and before an incident occurs.

A Key Risk Indicator answers the question: is a specific risk increasing? It is predictive, threshold-driven, and forward-looking. A KRI is not useful without a defined threshold — the point at which the measurement signals a breach of risk appetite and triggers a mandatory response. Patch lag trending upward is a data point. Patch lag exceeding 14 days on internet-facing critical systems is a KRI breach that demands an immediate owner response.

The signal chain runs in one direction. KPIs provide the raw data. KCIs interpret whether the controls managing risk are healthy. KRIs aggregate the signals into risk intelligence. Appetite thresholds convert that intelligence into governance triggers.
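The one-directional chain can be illustrated with patch management. In this sketch, which is an assumption-laden illustration rather than a reference design, the KPI is each asset's raw patch lag, the KCI is the share of internet-facing critical assets the patching control can see (against a hypothetical 95% floor), the KRI is the worst observed lag among those assets, and the governance trigger fires when that lag exceeds the 14-day appetite used earlier in the article.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class PatchState:
    asset: str
    internet_facing_critical: bool
    agent_reporting: bool   # is the patching control reporting for this asset?
    patch_lag_days: int     # KPI: raw operational measurement


def signal_chain(states: List[PatchState], kci_floor_pct: float = 95.0,
                 appetite_days: int = 14) -> Dict[str, object]:
    critical = [s for s in states if s.internet_facing_critical]
    # KCI: is the control healthy? Share of critical assets it can see.
    kci_pct = (100.0 * sum(s.agent_reporting for s in critical) / len(critical)
               if critical else 100.0)
    # KRI: worst observed patch lag among critical assets the control reports on.
    kri_days = max((s.patch_lag_days for s in critical if s.agent_reporting), default=0)
    return {
        "kci_pct": kci_pct,
        "kci_degraded": kci_pct < kci_floor_pct,          # earliest warning
        "kri_days": kri_days,
        "governance_trigger": kri_days > appetite_days,   # appetite breach
    }
```

An asset whose agent has stopped reporting is invisible to the KRI, which is exactly why the KCI sits in front of it: a degraded KCI warns that the KRI may be understating the risk.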

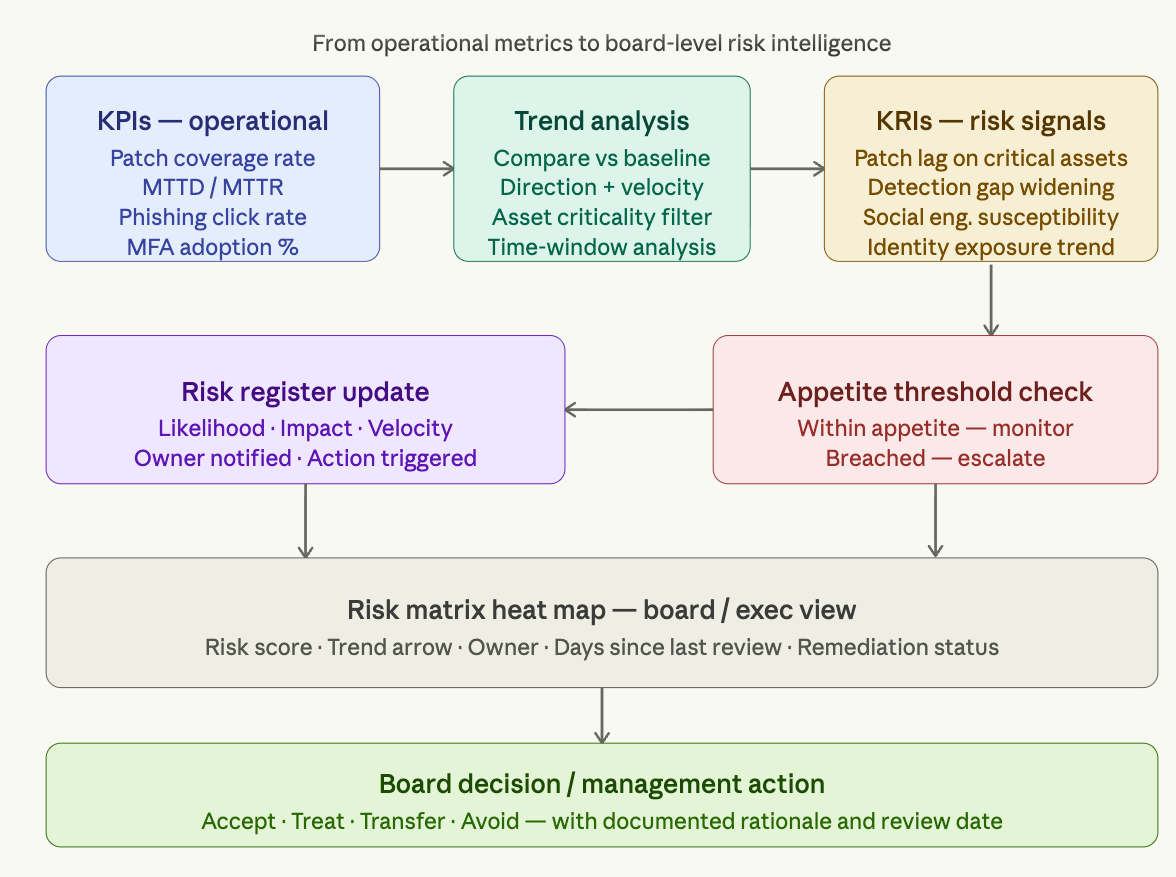

Part three: the KPI to KRI signal chain in practice

Every KRI in a well-designed programme traces back to at least one KPI and at least one KCI. When you design a KRI, you should be able to answer three questions: what KPI data feeds this indicator, what control does degradation of this KRI implicate, and what appetite threshold defines when action is mandatory?
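The three design questions can be captured directly in a KRI definition, so that an indicator which cannot answer them is flagged before it reaches a dashboard. The sketch below is illustrative; the field names and the example vendor KRI are hypothetical, the latter modelled on the third-party example in this section.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class KRIDefinition:
    name: str
    feeding_kpis: Tuple[str, ...]       # what KPI data feeds this indicator?
    implicated_control: Optional[str]   # which control does degradation implicate?
    appetite_threshold: Optional[str]   # when does action become mandatory?


def design_gaps(kri: KRIDefinition) -> List[str]:
    """Return the design questions this KRI cannot yet answer."""
    gaps = []
    if not kri.feeding_kpis:
        gaps.append("no KPI feeds this indicator")
    if not kri.implicated_control:
        gaps.append("no control is implicated by its degradation")
    if not kri.appetite_threshold:
        gaps.append("no appetite threshold makes action mandatory")
    return gaps
```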

Here is how that works across four example risk areas, from raw operational metric through to governance trigger:

Identity and access — credential compromise risk. The KPI is failed authentication attempts per user per day, a continuous operational measure. The KCI is MFA enforcement rate on privileged accounts, which tells you whether the control designed to mitigate credential compromise is healthy. The KRI is a privileged account without MFA that also shows a spike in failed authentication attempts sustained over 48 hours, which signals that credential compromise risk is actively elevated above appetite. The governance trigger is immediate notification to the identity team lead and escalation to the CISO if the issue is not remediated within the defined SLA.
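A minimal sketch of the credential compromise KRI and its escalation route follows. It assumes daily failure counts per account, approximates "sustained over 48 hours" as the last two daily buckets, and treats a spike as more than a hypothetical 3× the account's baseline; the SLA value is left to the caller.

```python
from typing import Sequence


def credential_kri_breached(mfa_enforced: bool, daily_failures: Sequence[int],
                            baseline_per_day: float, spike_factor: float = 3.0) -> bool:
    """KRI for one privileged account.

    Breached when the account lacks MFA AND failed authentications have
    exceeded spike_factor x baseline on each of the last two days (~48 hours).
    """
    if mfa_enforced:
        return False
    last_two = list(daily_failures)[-2:]
    if len(last_two) < 2:
        return False
    return all(n > spike_factor * baseline_per_day for n in last_two)


def escalation_route(hours_open: float, sla_hours: float) -> str:
    """Notify the identity team lead first; escalate to the CISO once the SLA lapses."""
    return "CISO" if hours_open > sla_hours else "identity team lead"
```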

Vulnerability management — exploitation risk. The KPI is critical CVE count and remediation time measured against SLA. The KCI is scan coverage as a percentage of the known asset inventory; if coverage is degrading, you have blind spots. The KRI is an EPSS-scored vulnerability above 0.7 unpatched on an internet-facing critical asset beyond the defined remediation window, which combines exploitability, criticality, and time into a single risk signal. The governance trigger is mandatory board notification if the window exceeds 30 days.
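The exploitation KRI combines three inputs, and a short sketch makes the combination explicit. EPSS (the Exploit Prediction Scoring System maintained by FIRST) expresses a probability between 0 and 1. The 14-day remediation window is a hypothetical default, and the code reads "if the window exceeds 30 days" as "open for more than 30 days since detection", which is an assumption about the text's intent. The CVE identifier is a placeholder.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class OpenVulnerability:
    cve_id: str
    epss: float                      # EPSS probability, 0.0 to 1.0
    internet_facing_critical: bool
    first_detected: date


def exploitation_kri(v: OpenVulnerability, today: date,
                     remediation_window_days: int = 14,
                     board_after_days: int = 30) -> str:
    """Combine exploitability, asset criticality and time into one signal."""
    if v.epss <= 0.7 or not v.internet_facing_critical:
        return "not_in_scope"
    days_open = (today - v.first_detected).days
    if days_open > board_after_days:
        return "board_notification"
    if days_open > remediation_window_days:
        return "kri_breach"
    return "within_window"
```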

AI model drift — decision quality risk. The KPI is model accuracy measured against the baseline on a defined evaluation schedule. The KCI is the frequency and completeness of model performance evaluations — if evaluations are being skipped or delayed, the control is failing. The KRI is model accuracy deviation exceeding 5% from the established baseline on a high-criticality AI system — which signals that the AI system is making increasingly unreliable decisions in production. The governance trigger is immediate review by the platform lead and risk notification to the accountable executive.
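The drift example pairs a KRI with a KCI, and both can be sketched in a few lines. This sketch assumes "5% from the established baseline" means a relative deviation (a drop from 0.92 to 0.86 accuracy is about 6.5%); an organisation that means percentage points would compare the absolute difference instead. The evaluation interval is a hypothetical parameter.

```python
from datetime import date


def accuracy_deviation_pct(current: float, baseline: float) -> float:
    """Relative deviation of current accuracy from the baseline, in percent."""
    if baseline <= 0:
        raise ValueError("baseline accuracy must be positive")
    return abs(current - baseline) / baseline * 100.0


def drift_kri_breached(current: float, baseline: float, high_criticality: bool,
                       threshold_pct: float = 5.0) -> bool:
    """KRI: deviation beyond threshold on a high-criticality AI system."""
    return high_criticality and accuracy_deviation_pct(current, baseline) > threshold_pct


def evaluation_kci_failing(last_evaluated: date, today: date, interval_days: int) -> bool:
    """KCI: the evaluation control is failing if the scheduled evaluation is overdue."""
    return (today - last_evaluated).days > interval_days
```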

Third-party risk — supply chain exposure. The KPI is vendor security assessment completion rate against the defined annual cycle. The KCI is the percentage of critical vendor contracts with right-to-audit clauses — a structural control that enables the assessment programme to function. The KRI is any critical vendor overdue for assessment by more than 60 days — which signals that a material dependency is operating without current assurance. The governance trigger is escalation to the risk committee.
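The third-party example can be sketched the same way: the KCI is a contract property and the KRI is a date calculation. This assumes an annual (365-day) assessment cycle and the 60-day grace period from the text; vendor names and dates are invented.

```python
from datetime import date, timedelta
from typing import List, NamedTuple


class Vendor(NamedTuple):
    name: str
    critical: bool
    last_assessed: date
    right_to_audit: bool


def right_to_audit_pct(vendors: List[Vendor]) -> float:
    """KCI: share of critical vendor contracts with a right-to-audit clause."""
    critical = [v for v in vendors if v.critical]
    if not critical:
        return 100.0
    return 100.0 * sum(v.right_to_audit for v in critical) / len(critical)


def overdue_critical_vendors(vendors: List[Vendor], today: date,
                             cycle_days: int = 365, grace_days: int = 60) -> List[str]:
    """KRI: critical vendors more than grace_days past their assessment due date."""
    flagged = []
    for v in vendors:
        due = v.last_assessed + timedelta(days=cycle_days)
        if v.critical and (today - due).days > grace_days:
            flagged.append(v.name)
    return flagged
```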

The next diagram shows the full KPI-to-KRI flow — how raw operational metrics translate into risk signals and ultimately into board-level decisions:

Part four: the reporting architecture

A metrics programme is only as valuable as the reporting structure that sits around it. Metrics that are measured but not reviewed are a compliance exercise. Metrics that are reviewed but not acted on are an audit trail. Metrics that drive decisions are a governance programme.

The reporting architecture for a cyber risk metrics programme has three distinct layers, each serving a different audience with a different cadence.

The operational layer serves the security team and is continuous. Automated dashboards track KPIs in near-real-time. KCI thresholds are monitored with automated alerting — a KCI breach triggers immediate notification to the named owner, not a weekly report. This layer is not about reporting up. It is about enabling the security team to act before a KRI threshold is breached.

The management layer serves the CISO, risk committee, and senior leadership team. Monthly reporting covers the current state of all KRIs, trend direction over the past 90 days, any threshold breaches in the period and the actions taken, and the status of previously agreed treatment actions. This is where risk owners report on their assigned indicators and where escalation decisions are made.
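The "trend direction over the past 90 days" in the monthly report needs a consistent definition, or each risk owner will eyeball it differently. One option, sketched here as an assumption rather than a recommendation, is the sign of a least-squares slope over the window, with a hypothetical flat band of 0.01 units per day and a flag for indicators where higher values are better.

```python
from datetime import date
from typing import List, Tuple


def trend_direction(samples: List[Tuple[date, float]], today: date,
                    window_days: int = 90, flat_per_day: float = 0.01,
                    higher_is_worse: bool = True) -> str:
    """Classify a KRI's direction over the reporting window via a least-squares slope."""
    pts = [((d - today).days, v) for d, v in samples
           if 0 <= (today - d).days <= window_days]
    if len(pts) < 2:
        return "insufficient_data"
    n = len(pts)
    mean_x = sum(x for x, _ in pts) / n
    mean_y = sum(y for _, y in pts) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pts)
    if sxx == 0:
        return "insufficient_data"
    slope = sum((x - mean_x) * (y - mean_y) for x, y in pts) / sxx  # units per day
    if abs(slope) <= flat_per_day:
        return "stable"
    worsening = slope > 0 if higher_is_worse else slope < 0
    return "worsening" if worsening else "improving"
```

Samples older than the window are ignored, so a single bad month last year does not distort this quarter's narrative.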

The board layer serves directors and the audit and risk committee. Quarterly reporting covers the aggregate risk posture relative to appetite, the five highest-rated risks and their trend direction, any material changes since the last board report, and the status of board-approved risk treatment decisions. This should be no more than two to three pages — not a metrics catalogue but a risk narrative supported by key numbers.
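The board view is deliberately small, and the reduction from register to board pack can be sketched as a single function. This is illustrative only: it assumes each risk carries a numeric residual rating, a per-category appetite limit on the same scale and a trend label; the risk names and ratings are invented.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RiskSummary:
    name: str
    rating: float          # residual risk rating on the organisation's scale
    appetite_limit: float  # highest rating within appetite for this category
    trend: str             # e.g. "worsening", "stable", "improving"


def board_view(risks: List[RiskSummary], top_n: int = 5) -> Dict[str, list]:
    """Condense the register into the few numbers a board pack needs."""
    ranked = sorted(risks, key=lambda r: r.rating, reverse=True)[:top_n]
    return {
        "top_risks": [(r.name, r.rating, r.trend) for r in ranked],
        "outside_appetite": [r.name for r in risks if r.rating > r.appetite_limit],
    }
```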

For AI risks, the reporting architecture requires one additional element: a triggered report outside the normal cadence. When an AI system is materially changed — vendor retraining, new deployment context, significant architecture update — the associated KRIs should be immediately re-evaluated and the result reported to the management layer within a defined window. The quarterly cycle is insufficient for AI systems that can change state between meetings.


For AI risks specifically, the reporting cadence must be more responsive than traditional quarterly cycles. An AI system that has undergone vendor retraining, that has been deployed in a new use context, or that has produced a bias audit finding is a changed risk — and the risk register should reflect that change within days, not quarters.
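An out-of-cycle trigger for AI systems can be sketched as an event handler that opens a re-evaluation task whenever a material change is recorded. The event types below are taken from the text; the 5-day reporting window, the KRI registry and its contents are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional

# Event types treated as material changes (taken from the text).
MATERIAL_CHANGES = {
    "vendor_retraining",
    "new_deployment_context",
    "architecture_update",
    "bias_audit_finding",
}


@dataclass
class ReEvaluation:
    system: str
    kris: List[str]
    report_due: date   # deadline for reporting to the management layer


def on_ai_change(event: str, system: str, occurred: date,
                 kri_registry: Dict[str, List[str]],
                 report_window_days: int = 5) -> Optional[ReEvaluation]:
    """Open an out-of-cycle re-evaluation for a materially changed AI system."""
    if event not in MATERIAL_CHANGES:
        return None
    return ReEvaluation(system, list(kri_registry.get(system, [])),
                        occurred + timedelta(days=report_window_days))
```

Non-material events return None and wait for the normal cadence; material ones get a dated deadline measured in days, not quarters.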

The maturity journey: where most organisations actually are

Most organisations that believe they have a functioning cyber risk matrix are actually in one of three early maturity states. They have a risk register but no KRIs — the register is updated when someone remembers to update it, not when a threshold is breached. They have KRIs but no appetite thresholds — the indicators are measured but there is no defined point at which a measurement constitutes a problem. Or they have both but no named owners — risks are tracked but nobody is accountable for doing anything about them.

The AI risk dimension makes this maturity gap more acute, because AI systems introduce a rate of change that manual risk management processes cannot keep pace with. A quarterly risk review cycle is adequate for stable infrastructure. It is inadequate for an AI model that a vendor retrains monthly.

The path to a genuinely functional cyber risk matrix — one that includes AI risks, connects KPIs to KRIs, and gives boards actionable intelligence — is a maturity journey of roughly five stages: from ad hoc (no structured risk register), through defined (documented but not measured), managed (KPIs measured but not risk-weighted), optimised (KRIs with appetite thresholds and named owners), to continuous (automated monitoring with real-time board visibility). Most organisations sit between defined and managed. The gap between managed and optimised is where this framework operates.
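The five stages can be expressed as an ordered scale with a simple self-assessment. This is a simplification under stated assumptions: each stage is reached only when every earlier condition holds, and the three early failure states described above (no KRIs, KRIs without thresholds, or no named owners) all keep an organisation at managed or below.

```python
from enum import IntEnum


class Maturity(IntEnum):
    AD_HOC = 1       # no structured risk register
    DEFINED = 2      # documented but not measured
    MANAGED = 3      # KPIs measured but not risk-weighted
    OPTIMISED = 4    # KRIs with appetite thresholds and named owners
    CONTINUOUS = 5   # automated monitoring with real-time board visibility


def assess_maturity(has_register: bool, kpis_measured: bool,
                    kris_with_thresholds: bool, named_owners: bool,
                    automated_board_visibility: bool) -> Maturity:
    """Return the highest stage whose prerequisites are all met."""
    if not has_register:
        return Maturity.AD_HOC
    if not kpis_measured:
        return Maturity.DEFINED
    if not (kris_with_thresholds and named_owners):
        return Maturity.MANAGED
    if not automated_board_visibility:
        return Maturity.OPTIMISED
    return Maturity.CONTINUOUS
```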

Conclusion: metrics without foundations are noise

The organisations that get the most value from a cyber risk metrics programme are not the ones with the most metrics. They are the ones that have done the prerequisite work — defined their appetite, built their taxonomy, established baseline control maturity, and assigned named ownership — and then built a lean, threshold-driven measurement system on top of that foundation.

The AI layer does not require a separate framework. It requires additional KPIs, KCIs, and KRIs, new risk categories in the taxonomy, and a governance cadence that is responsive enough to track systems that change between quarterly reviews. The discipline is identical. The vocabulary is new.

A useful test for any metrics programme is to ask three questions about every metric it contains. Does it have a defined threshold that distinguishes acceptable from unacceptable? Does it have a named individual who is accountable for acting when that threshold is breached? And does it connect to a risk appetite statement that explains why this metric matters to the organisation?
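The three-question test lends itself to an automated sweep of the metrics catalogue. The sketch below is a minimal illustration, assuming each metric records an optional threshold, an optional named owner and an optional reference to an appetite statement entry; the metric names are hypothetical. A threshold of 0 counts as defined, since zero-tolerance thresholds are legitimate.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Metric:
    name: str
    threshold: Optional[float]     # distinguishes acceptable from unacceptable
    owner: Optional[str]           # named individual accountable for acting
    appetite_ref: Optional[str]    # appetite statement entry explaining why it matters


def three_question_test(m: Metric) -> List[str]:
    """Return the questions a metric fails; an empty list means it produces intelligence."""
    failures = []
    if m.threshold is None:
        failures.append("no defined threshold")
    if not m.owner:
        failures.append("no named accountable owner")
    if not m.appetite_ref:
        failures.append("no link to the risk appetite statement")
    return failures
```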

If the answer to any of those three questions is no, the metric is producing data, not intelligence. And data without intelligence is not risk management — it is record-keeping.
